Computers are complex collections of circuitry designed to offload some of our thinking to external tools. They are tools that extend and integrate with our conscious experience.

High performance computing, a label that for much of its history has been a moving target, has become the norm. What was once thought impossible to build in even large spaces now sits in our purses, performing billions of cycles per second.

In this landscape of high performance compute, is 8-bit computing not obsolete? What purpose, besides legacy software support or retrogaming, could 8-bit Instruction Set Architectures (ISAs) serve? Why are computer scientists and end users engaging with such relics of computing history to this day?

While many use cases for 8-bit compute remain for both devs and end users in the current embedded space, even these devices are being challenged by 32-bit microcontrollers with similar or improved performance, including in ultra low-power devices. Despite this, at time of writing it seems that several 8-bit ISAs will remain relevant well beyond our lifetimes. Many of these 8-bit embedded use cases remain invisible to end users, though, who do not typically interface with the electrical components of embedded products.

There are, however, many less-discussed reasons to return to and understand our computing roots in the 8-bit landscape. Hopefully the arguments presented herein will persuade some readers to join those of us exploring this landscape from a modern perspective.

The World of High Performance Computing

As previously stated, high-performance computing is a bit of a moving target. At first, any electrical computer was a high-performance computer when compared to human computer pools and mechanical computer designs.

As architecture design evolved alongside the development of Integrated Circuit (IC) technology, 8-bit computing platforms became the new, high-performance standard. The relatively short-lived 16-bit era was soon followed by the 32-bit and 64-bit eras, which represent today's high-performance platforms. This, however, is not the whole story.

Computing Conventions and How They Limit Users

It is not so simple as saying that 8-bit and 16-bit computing were replaced by 32/64-bit platforms; rather, the adoption of certain technology standards by industries at large constitutes a collection of computing conventions: traditional standards technologists follow simply because they are a standard.

Conventions in computing are important as they create a set of tools that all stakeholders can standardize on. This improves interoperability and portability between platforms. The conventions we use today are driven by the mass purchasing decisions of corporate and government organizations during the early computing eras. We live within this legacy of capitalism and computing convention that has established our current standards: processor ISAs like Intel/AMD x86, OS standards like Windows, GNU/Linux, and macOS, and bus protocols like PCIe and USB.

These standards allow us to create computing ecosystems that are highly supportable, but they also limit us to a specific set of tools. Fortunately, with enough knowledge, communities can create their own tools, and this is exactly what's happening today in the 8-bit community.

Much of computing history has been industry driven, but much of it has also been community driven. Community driven projects tend to focus on transparency and comprehensibility of their design, opening specifications, schematics, and software as part of their process. Free and Open Source Software (FOSS) and hardware groups are active in the 8-bit computing space because 8-bit computing is accessible, approachable, useful, and educational, all common values of FOSS advocates.

When ISAs Competed

One benefit of a common computing convention is binary interoperability. When the first CPUs implemented on ICs became the norm, there was no expectation of interoperable software between different ISAs or even different platforms built around the same ISA.

Each of these non-compatible computer designs represents a different computing platform. In the early days, these platforms competed with one another, each attracting its own adopters and developer communities.

These platforms succeeded or failed based on their level of adoption, mostly by businesses and governments but also by individual users. If enough of the business sector adopted a platform, it would often become a standard, regardless of its performance when compared to other platforms.

This is how the 6502, Z80, and 8088/8086 became early standards, followed eventually by the i386 and the subsequent x86 ISA releases we typically use today. The dominant ISA, in this case, is largely a result of industry adoption and development timing. Intel released the powerful i386 at the right time to see massive adoption early in its life, and this simple fact drives the standards we live by today.

Had Zilog, creator of the top 8-bit ISA of its day, released the 32-bit Z380 to market soon enough, we might be using Z380 systems to this day, with Intel being a historical footnote or a surviving microcontroller company, as Zilog is today. Motorola also had a great opportunity to become the market leader with the 68000 series of 16/32-bit processors, but failed to deliver on time and at scale, losing the trust of industry purchasers in its ability to deliver at all.

While many of the less successful 8-bit ISAs of the past are no longer manufactured, they are all still burdened by Intellectual Property (IP) rights. These chips have largely been reverse engineered and are well understood by the community, but they remain under proprietary license. That being said, some are still manufactured and available brand new.

Among these are the Z80, Z180, and 6502. There is also the eZ80, Zilog's latest microcontroller, which is backwards compatible with the Z80/Z180 when configured correctly. It vastly extends the capabilities of the ISA with built-in peripherals/timers/clocks and an enhanced addressing mode that allows it to access significantly more memory than previous renditions.

Update: Since time of writing, the Z80 and Z180 have, sadly, been discontinued. The eZ80 retains a high level of compatibility, but it is more difficult to physically work with, as only surface mount components are available.

While it may make sense that these designs remain relevant for small-scale, embedded applications, what is less obvious is the tremendous value 8-bit platforms can provide as a day-to-day computing tool.

Why Compute in 8-bits?

What madperson would accept the limitations of an 8-bit computing platform? In the eyes of the public, retrocomputing is for a particular type of nerd: the retro gamer.

Most retro gamers use 8-bit tech to emulate old platforms with accuracy. They either run on a software emulator, rebuild the platform design in real hardware, or use an FPGA to approximate the real hardware. Accuracy to the original experience is paramount to this community. As a result, many retrocomputer platforms known by the public are antiquated in their design, even when built with brand new components.

A few significant facts about computing are good to keep in mind here. First, modern computers are designed the same way, and work in the same ways, as retro 8-bit computers. 8-bit computers are modern systems and, while we've implemented more features, bus systems, and peripherals on 32-bit and 64-bit platforms, that doesn't change the fact that the design is universal. The Hermetic Principle of Correspondence is in play here: As Above, So Below.

Second, 32-bit and 64-bit CPUs and MCUs have many electrical lines that need to be controlled, which can make them difficult to understand, often requiring specialized tooling to interact with at the lowest levels. 8-bit CPUs and MCUs, on the other hand, have relatively few electrical lines that need to be controlled. This allows a user to explore the deepest levels of the platform's design more easily. Visualizing the circuits and understanding them is simpler. Making physical connections with hand soldering or simple probes is easier, in turn making circuit design and troubleshooting easier.

This means 8-bit computing is a proven foundation for understanding the more complex connections, peripherals, and protocols that make up today's high-performance suite of platforms.

During the days of 8-bit computing as high-performance computing, many concessions had to be made to keep systems affordable, and the limitations of those platforms are the result of these sacrifices. Today, these components are typically inexpensive enough that we simply do not need to live with those sacrifices anymore, making accurate emulation of old designs a niche that exists for retrogamers or those supporting ancient business applications.

While these are good and valid reasons to pursue 8-bit computing, accurate emulation remains a niche and very specific use case that leaves many of us in the 8-bit community frustrated, as we are interested in new and improved platform designs more than accurate recreation of outdated ones.

Fortunately, we have such machines. Some of the latest designs are so capable that they really put the 8-bit platforms of the past to shame. We have extended bus standards and improved IC manufacturing techniques that reduce power consumption. Static RAM chips are the norm, as are reflashable EEPROMs for firmware. We have access to SD cards and CompactFlash (CF) cards for mass storage, and we can still interact with IDE devices easily from 8-bit platforms. A time traveling computer scientist from the 8-bit era would be quite envious of the 8-bit options we have today.

Defining User Friendly

From its inception, computer science has largely been a protected practice, gatekept behind governments, businesses, and educational institutions that could afford to engage with it. Circuit designers and manufacturers maintain strictly proprietary control over their designs, while schematics, underlying implementations, and even some programming interfaces are kept secret behind closed documents. Besides the problems we've already seen this approach cause (think Spectre), this generates some systemic issues that affect how everyone engages with computing.

In the software space, projects like GNU and Linux, along with the various licenses they created, use, or inspired, have done the work to protect everyone's access to computing tech, but it could have played out very differently. Tight corporate control over computing was the norm until these groups came along. In the hardware space, RISC-V and, to a lesser extent, J-Core are working to ensure the same consumer rights for access to hardware designs. RISC-V, in particular, stands as insurance that humanity can have free access to hardware well into the future.

When corporations are designing "user-friendly" tools, they are also defining what it means to be "user-friendly." The conventions that are adopted the most become the current "best practice." While some computing processes can objectively have a best practice, user interaction is not among them. What the user considers best is never going to be consistent from one user to the next.

Today we think of different types of computer users: Gamers, Business Users, Content Creators, Physicists, Data Scientists, Software Devs, Casual Internet Browsers, and Systems Operators/Admins. The list probably goes on. We know that each of these user types has its own skillset and each interacts with the system differently. This is a fairly new development enabled by modern Operating System (OS) abstractions.

For a long time there was only one type of computer user: The Programmer. To use a computer effectively one needed a deep understanding of the platform design and CPU ISA. The user had to understand how to program the machine from a minimal execution environment, which made them strong system operators and software designers. With the rise of CP/M and, later, DOS/Windows and GNU/Linux on x86 ISAs, these early systems became accessible to other types of users.

Today's computing conventions at large do not encourage us to dig deeply into the system, reinforcing a cycle of obfuscated design in professional tools.

This is not necessarily a bad thing. We should not require every user to understand the system fully in order to use it, but it has led to a culture in which the computer is a mysterious, magical box that only a few experts take the time to understand. This is a bad thing, as strong computer science foundations become increasingly important for common citizens to navigate life.

Most consumer expectation surrounding computer interaction has been shaped by corporate interests that profit from obfuscating the inner workings of their platforms. Not all abstraction is intended to ease user or programmer burden; some of it is used to increase the difficulty of reverse engineering. We should, therefore, be wary of user-friendly design, as it is prescriptive. We should be able to choose which designs are the most friendly to us for our use cases and be aware of what limitations choosing a prescriptive platform will impose on us.

How Computers Think

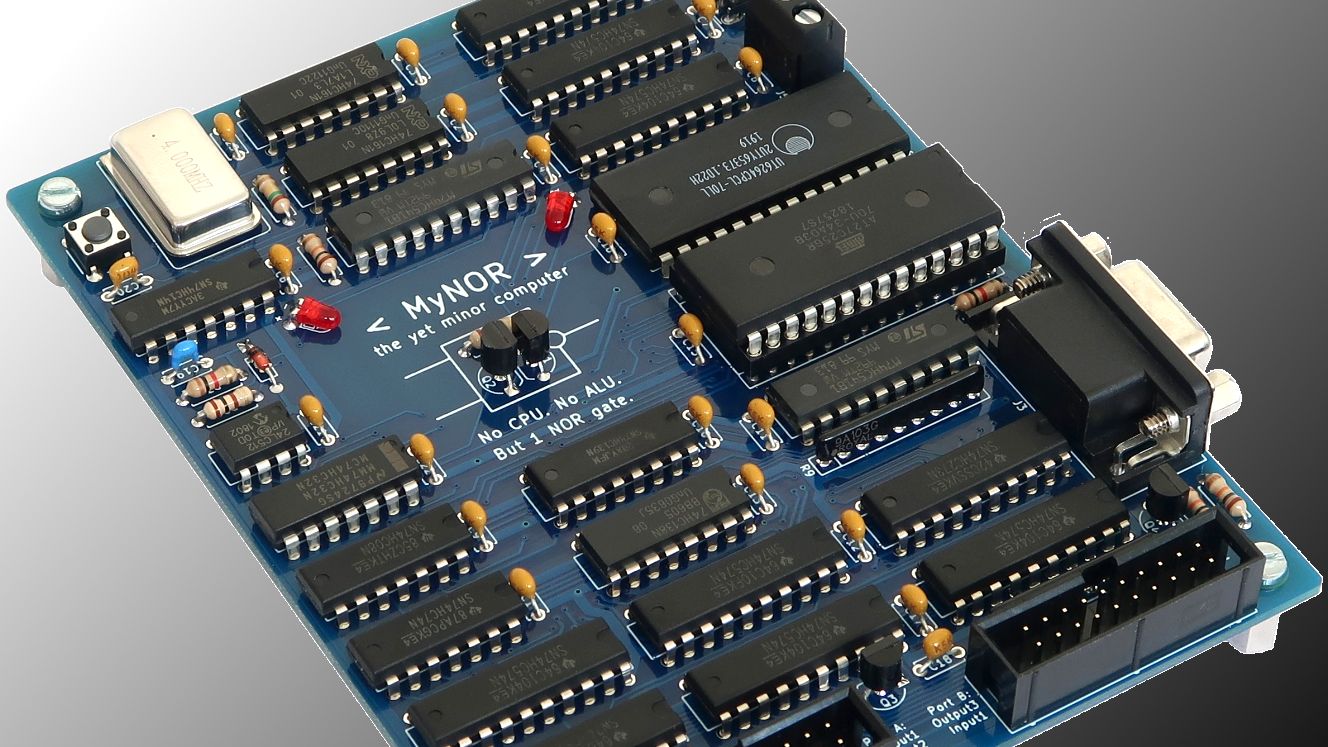

Computers are built from logical circuits, which are physical constructs created by connecting transistors together in specific patterns. A transistor is essentially an electrical on-off switch that can, itself, be controlled with an electrical signal. By arranging clusters of transistors and their inputs and outputs into certain patterns we can build machinery that implements boolean logical operations.

That's it. That's how computers think. Non-electrical computers are possible and predate electrical ones because these circuits represent physical structures which are capable of performing boolean calculations. We are able to use electrical lines to implement these structures at small sizes.
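
To make the idea concrete, here is a minimal sketch in Python that treats a single gate type (NAND) as the primitive a cluster of transistor switches might implement, then composes the other boolean operations and an 8-bit adder from it. The function names and the choice of NAND as the starting point are illustrative assumptions, not a description of any particular chip.

```python
# Illustrative model only: each function stands in for a small transistor
# network. NAND is taken as the primitive; every other gate is built from it.

def nand(a: int, b: int) -> int:
    """Output is 0 only when both inputs are 1."""
    return 0 if (a and b) else 1

def not_(a: int) -> int:
    return nand(a, a)

def and_(a: int, b: int) -> int:
    return not_(nand(a, b))

def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))

def xor(a: int, b: int) -> int:
    return and_(or_(a, b), nand(a, b))

def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three single bits; return (sum_bit, carry_out)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out

def add8(x: int, y: int) -> tuple[int, int]:
    """Ripple-carry addition of two 8-bit values; return (result, carry)."""
    result, carry = 0, 0
    for bit in range(8):
        s, carry = full_adder((x >> bit) & 1, (y >> bit) & 1, carry)
        result |= s << bit
    return result, carry

print(add8(2, 3))      # small values fit in 8 bits
print(add8(200, 100))  # 300 does not fit, so the carry bit is set
```

Chaining eight one-bit adders this way is roughly the kind of structure found inside an 8-bit ALU, although real designs use faster carry schemes.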

The science behind designing those structures, capable of performing boolean operations, is beyond the scope of this article. Different logical circuits are used to implement things like Arithmetic Logic Units (ALUs, an essential component of all CPUs) and other constructs in the CPU and peripheral ICs, but at a fundamental level it works like this:

We are given access to input and output conductors (the pins on the IC) which will carry an electrical signal when powered. We use the inputs to place certain combinations of on-off signals across those lines. The logical circuits are designed to respond to certain combinations with specific actions. This constitutes an Instruction.

Because binary can be used to easily represent the electrical states of on and off, we use binary to encode the state of those electrical lines into readable 1s and 0s. Because binary translates directly into hexadecimal notation, we typically represent these instructions in hex format.
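
As a small illustration of that encoding, the Python sketch below packs eight on/off line states into a byte and prints it in binary and hex. The helper name `lines_to_byte` and the sample line states are made up for this example; the resulting value, 3E, happens to be the Z80 opcode for loading an immediate value into the A register.

```python
def lines_to_byte(states: list[bool]) -> int:
    """Pack eight on/off line states (most significant line first) into a byte."""
    if len(states) != 8:
        raise ValueError("expected exactly eight line states")
    value = 0
    for state in states:
        value = (value << 1) | (1 if state else 0)
    return value

# Hypothetical states of eight data lines: off, off, on, on, on, on, on, off
data_lines = [False, False, True, True, True, True, True, False]

byte = lines_to_byte(data_lines)
binary_text = format(byte, "08b")  # one character per electrical line
hex_text = format(byte, "02X")     # one hex digit per group of four lines

print(binary_text, hex_text)
```

Each hex digit covers exactly four lines (0011 is 3, 1110 is E), which is why hex is the more compact way to write the same information.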

Compilers and assemblers are configured to take human readable input and convert that input into these raw instructions, also called machine code. This is the code that the CPU executes from memory.
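
A toy assembler shows the translation step in miniature. This Python sketch understands only a handful of Z80 instructions; the opcode values come from the Z80 instruction set, while the `assemble` function, its tables, and the sample program are illustrative assumptions rather than any real toolchain.

```python
# Opcodes for a tiny subset of the Z80 instruction set.
NO_OPERAND = {"NOP": 0x00, "INC A": 0x3C, "ADD A,B": 0x80, "HALT": 0x76}
IMMEDIATE = {"LD A,": 0x3E, "LD B,": 0x06}  # followed by one data byte

def assemble(source: str) -> bytes:
    """Translate a few human-readable mnemonics into raw machine code."""
    machine_code = bytearray()
    for raw_line in source.splitlines():
        text = raw_line.split(";", 1)[0]          # drop comments
        text = " ".join(text.upper().split())     # normalize case and spacing
        text = text.replace(", ", ",")
        if not text:
            continue
        if text in NO_OPERAND:
            machine_code.append(NO_OPERAND[text])
            continue
        for prefix, opcode in IMMEDIATE.items():
            if text.startswith(prefix):
                operand = int(text[len(prefix):], 0)
                if not 0 <= operand <= 0xFF:
                    raise ValueError(f"operand out of 8-bit range: {raw_line!r}")
                machine_code += bytes([opcode, operand])
                break
        else:
            raise ValueError(f"unsupported instruction: {raw_line!r}")
    return bytes(machine_code)

PROGRAM = """
    LD A, 0x2A   ; load 42 into register A
    LD B, 1      ; load 1 into register B
    ADD A, B     ; A = A + B
    HALT
"""

code = assemble(PROGRAM)
print(code.hex())
```

A real assembler adds labels, expressions, and the full instruction set, but the core job is the same table lookup from text to bytes.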

The CPU is designed to control all devices on the system. You give the CPU instructions and it issues instructions to peripheral devices on your behalf. This is typically handled through abstraction layers provided by the OS. It also handles requests from peripherals to perform work, typically using a system known as interrupts.
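
The loop a CPU runs can be sketched in a few lines. The Python model below is a deliberately simplified toy, not an accurate Z80 emulator: it executes a few real Z80 opcodes, ignores flags and timing, models an output peripheral as a callable attached to a port number, and represents an interrupt as a pending Python function serviced before the next instruction. The `ToyCPU` class, the port number, and the interrupt mechanism are all assumptions made for illustration.

```python
class ToyCPU:
    """Minimal fetch-decode-execute loop with one output port and interrupts."""

    def __init__(self, memory: bytes, ports: dict):
        self.memory = memory            # program image the CPU executes from
        self.ports = ports              # port number -> callable(value)
        self.a = 0                      # accumulator
        self.b = 0                      # general register
        self.pc = 0                     # program counter
        self.halted = False
        self.pending_interrupt = None   # callable(cpu) set by a peripheral

    def fetch(self) -> int:
        byte = self.memory[self.pc]
        self.pc += 1
        return byte

    def step(self) -> None:
        # Service a peripheral's request before fetching the next instruction.
        if self.pending_interrupt is not None:
            handler, self.pending_interrupt = self.pending_interrupt, None
            handler(self)
        opcode = self.fetch()
        if opcode == 0x00:                      # NOP
            pass
        elif opcode == 0x3E:                    # LD A,n
            self.a = self.fetch()
        elif opcode == 0x06:                    # LD B,n
            self.b = self.fetch()
        elif opcode == 0x80:                    # ADD A,B (flags ignored)
            self.a = (self.a + self.b) & 0xFF
        elif opcode == 0xD3:                    # OUT (n),A
            self.ports[self.fetch()](self.a)
        elif opcode == 0x76:                    # HALT
            self.halted = True
        else:
            raise ValueError(f"unsupported opcode: {opcode:#04x}")

    def run(self) -> None:
        while not self.halted:
            self.step()

# LD A,0x2A / LD B,1 / ADD A,B / OUT (0x10),A / HALT
program = bytes([0x3E, 0x2A, 0x06, 0x01, 0x80, 0xD3, 0x10, 0x76])

outputs = []          # stands in for a peripheral listening on port 0x10
interrupt_log = []    # records when the interrupt was serviced

cpu = ToyCPU(program, {0x10: outputs.append})
cpu.pending_interrupt = lambda c: interrupt_log.append(("irq", c.pc))
cpu.run()
print(outputs, interrupt_log)
```

On real hardware the interrupt handler is itself machine code at a known address, and the CPU saves its program counter before jumping there; the callable here only stands in for that mechanism.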

The Central Processing Unit (CPU) is the standard heart of modern computing, but it was not always. Early computers, particularly those predating ICs, implemented components typically associated with CPUs independently within a system. Indeed, it is still possible to design a computer with what is called "discrete logic" and there are modern examples available to build yourself.

Modern x86 Operating Systems (OSes), with the exception of GNU/Linux and several of the BSD variants, do not provide the typical user with much insight into their design. This, too, is not necessarily a bad thing, but it's important to keep in mind. The vast majority of human-computer interaction is non-technical in nature, which leaves the population somewhat disadvantaged when it comes to things like cyber-security, online privacy, organizational surveillance, and other concerns associated with computing.

It is certainly possible for the average user to learn x86 assembly language, and many do. C is still a very popular choice and, though it is a high level language, it is considered low level by today's industry standards, requiring some understanding of the underlying hardware to leverage effectively. These skills, rather than being considered fundamental to computer use, are considered niche. They are relegated to the realms of the obscure tinkerer or the industry expert, communities with an unsurprising amount of overlap.

It's worth noting that we increasingly see the less obscure "Makers" who tend to embrace embedded technologies of all types. Maker communities are definitely important for demystifying computing tech on many levels and bringing the popularity of both embedded development and 8-bit computing into more mainstream discussion. That being said, Makers is a broad term that applies to a tremendously large segment of the population, so take this note with a grain of salt. Not all Maker communities embrace that title and only some of those communities are deeply involved with 8-bit computing.

Modern 8-Bit: Corporate Control and the Tragedy of TI Education

8-bit computing never left us. It may not be our daily driver for work, school, or the internet, but modern 8-bit systems have been with us this entire time. While there are multiple manufacturers of note to discuss, this article will focus on TI Education, the branch of Texas Instruments responsible for their graphing calculator devices. These ludicrously capable pocket computers have a deep history of conflict between the company and its users surrounding the right to access low-level computing tools.

TI Education continues the tradition of strict, corporate control over IP and internal designs for even its simplest of calculators. The Z80, eZ80, and even ARM-driven devices they continue to manufacture today are no exception. These calculators are renowned for their capable hardware yet notorious for their inaccessibility and lack of developer tools. In the simplest terms, the entire TI graphing calculator lineup represents capable computing systems that have been intentionally hobbled to reduce user choice.

Ostensibly this is done to prevent cheating on standardized tests. It is arguable whether this is a sufficient reason to remove so much user choice. This judgement will be left as an exercise for the reader. It is worth noting that there is no real evidence to suggest that these measures actually prevent cheating.

The TI-8x platforms represent a ubiquitous, modern 8-bit computing platform that the vast majority of students in the U.S. and parts of Europe will be familiar with. In the U.S. it can often be required to have one of these devices to participate in standard coursework. Take note that these types of requirements are very much a result of the efforts of TI-Education to incorporate their devices into state-wide and nation-wide public school curricula. The U.S. education system largely has an enforced hegemony of TI-8x calculators, meaning the devices are ubiquitous and accessible to most.

Other brands like HP, Casio, NumWorks, and Sharp are less common but increasingly popular in the U.S. In some parts of the world these brands are far more recognizable than TI.

At first glance this is exciting news. The power of advanced mathematics software on a capable computing platform in everyone's hand. How empowering! There is a nefarious effect to this level of user restriction, however, that impacts our culture at deeper levels than one might realize.

The problems start here:

- The TI-8x platforms use DRM signing keys to lock their ROMs, preventing OS customization and replacement.

- TI-Education has removed assembly language support from all systems, despite still publicly advertising ASM support on every box.

- Hidden vector tables and license agreements prohibiting reverse engineering of binary and physical interfaces. It's worth noting that these clauses have proven unenforceable against the average calculator user, but may be enforceable against a business entity. This does not stop TI-Education from engaging in legal harassment of calculator users who participate in reverse engineering efforts publicly.

- Proprietary serial protocol. This is documented and easily implementable by the average programmer, but it reduces interoperability.

- Obfuscated circuit design, typically in the form of privately documented Application Specific Integrated Circuits (ASICs).

- Proprietary IDE which is no longer available. They'll give it to you if you ask nicely and tell them you're an educator, but they guard it strictly.

- Even among those hardware/firmware revisions that still support assembly language programs without a hack or mod, there is no on-calc assembler. Machine language code must be manually entered into the BASIC editor and executed using a function. This eliminates the ability to use well-structured subroutine design and certainly doesn't support preprocessor macros.

The hardware present in the TI-8x platform could make a powerful computer science education tool, accessible to and often required by most school children in North America, yet it limits users only to a set of specific, proprietary applications. This is a missed opportunity to incorporate low-level computing concepts into our basic, public school curricula. A device like this can be useful for every subject, not just math, in the right hands.

My love affair with computing started with the TI-82. At the time I felt frustrated with its limitations; some part of me knew this little computer could do so much more than it was allowed to. I can only imagine how much sooner I would have begun my professional understanding of computing systems had I been able to use an assembly language editor, been provided with an IDE and toolchain to use freely, or otherwise had deeply documented hardware specifications and schematics.

Many tinkerers succeed in bypassing these limitations in various ways, but their methods still represent less-than-ideal workarounds at best. At the end of the day, there is a strong hunger for 8-bit computing access among students precisely because they find themselves holding computers that limit them. For the right entrepreneur, there is a growing opportunity to satisfy that hunger.

TI-Education is probably the most draconian in their level of hardware and software obfuscation, but most other companies follow suit. TI's latest offerings, featuring the eZ80 microcontrollers, are poorly designed and implemented. They are hobbled at every stage of their implementation, spectacularly failing to showcase the power of this amazing Microcontroller (MCU) family.

The failure to embrace the TI-Calc developer community has proven to be the true tragedy of TI-Education. It has harmed the entire educational community in ways that have a knock-on effect on our entire population, reducing low level computer science literacy across the spectrum. Even expert computer scientists of today often struggle to grasp these low level subjects, having been raised on the conventional abstractions of x86 OSes.

By locking these tools so severely, corporate interests are restricting our access to ease of education. It takes advanced knowledge to work around these artificial barriers, when it's even possible to do so. Workarounds are often rendered ineffective through mandatory firmware updates distributed by TI, often breaking entire libraries of user applications developed by the community. Every step of the way, TI-Education has fought against the community's right to compute with the devices they own, and sought to restrict access to low level functionality.

The severity of the impact cannot be overstated. The most common computing tool in schools actively disempowers users from understanding computing outside of the very limited scope of its proprietary software. This creates a false perception of the capabilities of the underlying tech that permeates our culture, causing us to dismiss it as obsolete. Most importantly, though, it reduces interest in access to low level computing concepts by putting up barriers to block users.

Moving Forward

So far this discussion has made a distinction between 8-bit computing and retrocomputing. To most the terms are synonymous and, in fact, the overlap between communities is pretty close to 100%, but it's a distinction worth pointing out.

As mentioned for retrogaming and legacy software support, retrocomputing generally seeks to restore existing retro-tech from the past, or to recreate or emulate it accurately in software or hardware. Retrocomputing is largely about nostalgia, revisiting games, or, for some, running the business software they learned on to perform their daily work. Many readers will be surprised to learn how often legacy tools are used in modern computing.

There is a modern movement among 8-bit computing developers, though, to simply create the most usable 8-bit systems by today's standards. We not only have access to improved revisions of the original ISAs for the Z80, Z180, and the 6502 and related chips (including their standard peripherals), we also have them at significantly lower prices than devs of the past. Furthermore, we have access to Printed Circuit Board (PCB) services, allowing any home developer to have their own PCB designs manufactured at low cost in both small and large batches.

Things are starting to happen. While modern 8-bit computing still has a long way to go before it is particularly accessible to the average user, we now have access to a suite of some of the most capable 8-bit systems ever devised. This increased accessibility of development platforms has, unsurprisingly, led to an explosion in developer activity in the 8-bit computing space. With history as our guide, we know it is only a matter of time before this leads to increasingly usable 8-bit workstations, requiring less and less technical expertise to operate successfully.

Not only are new hardware platforms available, but entirely new operating systems are as well. Most modern platforms work with updated revisions of firmware like MS-BASIC or operating systems like CP/M. Some of these, like RomWBW, represent the cutting edge in development for CP/M, providing a multi-boot environment for supported systems. Other developers, however, are building entirely new operating systems using the latest design principles.

It is early days, yet, for the custom 8-bit OS, but the options are robust even this early. 8-bit systems are, after all, very approachable from a complexity perspective.

Modern 8-bit: The Platforms

What constitutes a platform? It can vary. At the lowest level the platform refers to the CPU ISA chosen and the chipset that supports that CPU. Modern x86 CPUs tend to need a collection of specific supporting co-processors or logical glue ICs that allow them to perform their work across the buses of the motherboard. These are embedded in the motherboard and referred to as the chipset. 8-bit computers don't typically have required chipsets, so in this case the platform refers more to the 8-bit ISA chosen as well as the base set of provided peripherals.

Most of these designs are extensible, meaning you can change the platform to include custom hardware, drivers, and firmware (operating environment). Users are working to port new 8-bit operating systems across multiple platforms, but at present the supported OS is also often an integral part of the platform.

These 8-bit OSes can be considered interchangeable across the computers discussed but, at time of writing, each board only officially works with the OS noted as supported.

- RC2014 - Supported OS: BASIC (various), CP/M (optional, requires CF card and memory upgrade), CollapseOS, Small Computer Monitor (SCM)

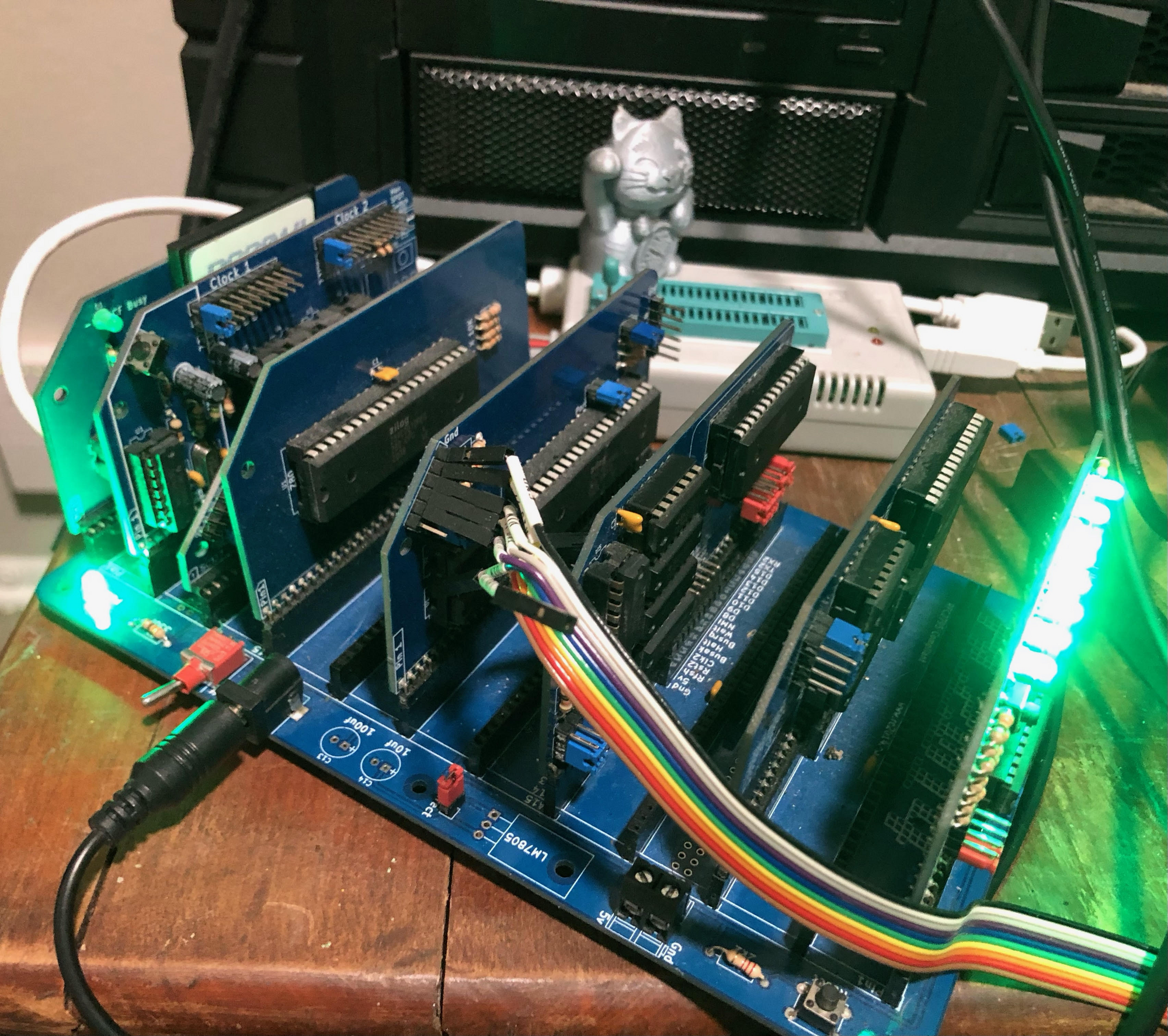

The RC2014 represents a pioneering release in modern 8-bit computer design. While not the first to implement a homebrew Z80 platform in today's high-performance compute ecosystem, it was among the first to gain widespread popularity and commercial success.

The original RC2014 has been discontinued, but improved models have been released since, including a Pro edition. RC2014 is a modular design, providing a backplane with a standard bus layout and adapter boards for peripherals and CPU modules. This highly customizable design became such a staple in the modern 8-bit computing community that its bus design has been extended, twice, and formalized as a community standard, the RCBus. This is an example of a community developed computing convention in the 8-bit space.

A similar standard, the Z50Bus developed by LiNC, is a 50-pin bus system that was formalized prior to the adoption of the RCBus, and these buses are interoperable using an appropriate adapter.

The official RC2014 components can be purchased here: https://www.tindie.com/stores/semachthemonkey/ and here: https://z80kits.com

- Zeal-8-Bit Computer - Supported OS: Zeal-8-Bit OS

The Zeal-8-Bit Computer is a completely modern design built around the Z80. It implements a 22-bit address space with a simple, custom Memory Management Unit (MMU), providing a full 4MB of address space to the CPU and peripherals. It is designed to run a custom operating system called Zeal-8-Bit OS, which is still in early development. The OS aims to be familiar to Linux/Unix users, with bash-like commands packed into limited memory space. It can be configured for platforms that lack the custom MMU logic, defaulting to a compatible 64KB address space and, as such, has been ported to other platforms. The OS itself can support up to a 24-bit address space, making it easily portable to eZ80 devices, which support up to 16MB of address space in their ADL (Address Data Long) memory mode.
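
The address-space figures follow directly from the bit widths: 2^16 bytes is 64KB, 2^22 is 4MB, and 2^24 is 16MB. How a 16-bit CPU reaches a 22-bit space is the MMU's job. The Python sketch below shows one common way to do it, paging, under the assumption of four 16KB windows that can each be pointed at any 16KB page of physical memory. The class, its method names, and the page size are illustrative assumptions and are not taken from the Zeal design documents.

```python
VIRTUAL_BITS = 16     # what a Z80 can address directly
PHYSICAL_BITS = 22    # address width described in the article
PAGE_BITS = 14        # assumed 16KB pages, giving four windows in 64KB
PAGE_SIZE = 1 << PAGE_BITS
SLOT_COUNT = 1 << (VIRTUAL_BITS - PAGE_BITS)      # 4 windows
PAGE_COUNT = 1 << (PHYSICAL_BITS - PAGE_BITS)     # 256 physical pages

class SimpleMMU:
    """Hypothetical paged MMU: each 16KB window maps to one physical page."""

    def __init__(self) -> None:
        # Start with an identity mapping of the first 64KB.
        self.page_registers = list(range(SLOT_COUNT))

    def map_page(self, slot: int, physical_page: int) -> None:
        if not 0 <= slot < SLOT_COUNT:
            raise ValueError("slot out of range")
        if not 0 <= physical_page < PAGE_COUNT:
            raise ValueError("physical page out of range")
        self.page_registers[slot] = physical_page

    def translate(self, virtual_address: int) -> int:
        if not 0 <= virtual_address < (1 << VIRTUAL_BITS):
            raise ValueError("virtual address out of range")
        slot = virtual_address >> PAGE_BITS           # top 2 bits pick the window
        offset = virtual_address & (PAGE_SIZE - 1)    # low 14 bits pass through
        return (self.page_registers[slot] << PAGE_BITS) | offset

mmu = SimpleMMU()
mmu.map_page(3, PAGE_COUNT - 1)        # point the top window at the last page
top_of_memory = mmu.translate(0xFFFF)  # highest virtual address
print(hex(top_of_memory), (1 << PHYSICAL_BITS) // (1024 * 1024), "MB")
```

With this scheme the CPU still only ever sees 64KB at a time; software reaches the rest of memory by rewriting a page register before accessing the corresponding window.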

Zeal-8-Bit OS is a well structured, assembly language, single-user, single-tasking OS that inherits some design ideas from the Linux kernel and command line interface. It strives to improve accessibility to 8-bit computing through the support of modern assembly and C toolchains, relying on the popular z88dk cross-compiler as its own build toolchain. This provides access to full, feature rich ASM and C programming environments. The kernel implements system calls much like a modern kernel and behaves as expected by modern kernel and driver developers.

The Zeal-8-Bit Computer represents the cutting edge of modern 8-bit computing design.

Unlike most of the other computers mentioned here, the Zeal-8-Bit Computer is fully assembled out-of-box. It can be found here: https://www.tindie.com/stores/zeal8bit/

Update: A solder kit has since been made available for those who would prefer to assemble their own, by popular demand.

- Small Computer Central - Supported OS: RomWBW, SCM, BASIC (various), Forth

Small Computer Central (SCC) is an amazing company that has done the bulk of the finest development in the 8-bit computing space to date. SCC is a prolific hardware developer, designer, and manufacturer. They specialize in Z80 and Z180 computer platforms running RomWBW, a modern, multiboot CP/M alternative with additional OS options that can be installed. SCM runs out-of-box on these systems, with all Z180 systems supporting RomWBW. Z80 systems typically ship with SCM, BASIC, and Forth.

Like the RC2014, these are modular systems. The oldest designs, which are retired, include custom bus connector layouts. The latest builds standardize on both the Z50Bus and the RCBus after they were formalized within the community.

SCC manufactures many models, with the SC500 series representing the state-of-the-art Z50Bus designs and the SC700 series representing the same for the RCBus 80-pin platforms. You can find SCC computers here: https://www.tindie.com/stores/tindiescx/

- Agon Light 2 - Supported OS: Quark, Machine Operating System (MOS), CP/M, Zeal-8-Bit OS

The Agon Light 2 is an updated, open source revision of the original Agon Light, an eZ80 development platform running a custom OS called Quark. The eZ80 is a microcontroller containing an enhanced, but backwards compatible, revision of the Z80 instruction set. It also houses many peripherals that would otherwise have to be added to the bus.

In many ways it is very much like a system-on-a-chip, representing a complete computer in one IC. It still needs to be connected to the correct components and power supplies to operate, of course, and the Agon Light 2's design really showcases the ISA's latest capabilities. This board will vastly outperform any eZ80 TI calculator in both speed and programmability.

Unlike most of the other options here, the Agon Light 2 does not provide full access to the CPU system bus, but it does provide enough access to extend it quite dramatically. Many of the peripherals one might need those spare lines to implement are built directly into the eZ80 IC or onto the circuit board itself. Like the Zeal-8-Bit Computer, this one comes fully assembled.

You can find the Agon Light 2 here: https://www.tindie.com/stores/agon/

- Commander X16 - Supported OS: Kernal

The Commander X16 is a redesign of the Commodore 64 with updated capabilities. It's somewhere between a retrocomputing design and a modern design, seeking compatibility with Commodore 64 architecture while improving it significantly.

This platform is the only one on this list, though not the only one to be developed, that uses the 6502 CPU. The vast majority of 8-bit developers today seem to standardize on Zilog ISAs for various reasons, but the 6502 is a perfectly capable 8-bit ISA that deserves its own chance to shine.

This platform absolutely delivers. Although it is still in its early stages, it is very popular, which makes development boards scarce. Many boards were given away to hardware devs for validation and testing, and anticipation for the platform is strong. Emulation and dev tools are already freely available, as is the operating system, and the community has a large collection of software tools and toys available for use today.

The Commander X16 is designed to be extensible and customizable, much like the previous platforms, providing full bus access. It uses a coprocessor to implement VGA support and multiple standard video modes: a custom, on-board FPGA called the VERA implements the GPU interface.

While the first run of boards has sold out, and there may be revisions before the next boards are printed, the Commander X16 project is just getting started. We should expect more boards, and reduced-footprint designs at lower cost, in the near future. Watch the project here: https://www.commanderx16.com/

Conclusion

So what makes computing high-performance? Is it purely a count of cycles per second and bus communication speeds? Is it measured in bits per second or calculations per minute? We can typically use MIPS (Millions of Instructions Per Second) to gauge a machine's performance at the highest level, but most ISAs do not execute exactly one instruction per cycle, so clock speed does not translate directly to MIPS.
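
The gap between clock speed and MIPS can be sketched as a short calculation: divide the clock rate by the average number of cycles each instruction takes. The clock rate and cycles-per-instruction figures below are illustrative assumptions, not measurements of any particular chip, and the function name `mips` is hypothetical.

```python
def mips(clock_hz, avg_cycles_per_instruction):
    """Estimate millions of instructions per second from clock rate and average cycles per instruction."""
    if avg_cycles_per_instruction <= 0:
        raise ValueError("cycles per instruction must be positive")
    return clock_hz / avg_cycles_per_instruction / 1_000_000

# Two hypothetical machines with the same 8 MHz clock but different
# average cycles per instruction.
slow = mips(8_000_000, 8)
fast = mips(8_000_000, 2)
print(slow, fast)
```

Two machines with identical clocks can differ severalfold in instruction throughput, which is why clock speed alone is a poor basis for comparing designs.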

The complexity of bus communication timing means that even the fastest systems have speed limits in places. Sometimes these are enforced by limitations of the bus design, sometimes by limitations of devices connected to the bus, and sometimes other conditions delay processing or even cause faults. This is true of every platform that exists, and it would surprise many readers just how performant or under-performant some of these designs can be when compared to one another.

As with any project, choosing the right tools is a key factor in success. For many use cases, particularly low-level computer science education, 8-bit computing is an appropriate, affordable, and accessible tool. Let us also consider that many organizations need more low-level technical skill.

Various 8-bit ISAs remain dominant in the embedded development world and, even where they aren't, learning the fundamentals on 8-bit microcontrollers translates directly to skill with 32-bit platforms. This increased interest in 8-bit computing across the population is, generally, an empowering movement. In addition to all the reasons outlined so far, Z80 and 6502 programming skills are still marketable and in demand, typically by employers who represent highly profitable organizations.

With the currently accelerating adoption of modern 8-bit development platforms, we may begin to see 8-bit consumer handhelds that are easily accessible to less technical users. Consumers who get their hands on modern 8-bit handhelds that grant them the freedom to explore, and the tools and documentation to learn their platform thoroughly, will quickly find themselves shocked at the juxtaposition with their tragic TI-8x handhelds.

For those concerned about connectivity to modern networks, it has proven trivial to add Ethernet and Wi-Fi peripherals to any standard Z80 or 6502 bus. The eZ80F91 series, Zilog's top-of-the-line eZ80 revision, includes an Ethernet media access controller (MAC) right on the chip.

Given that the eZ80 is the most viable choice for developing a modern 8-bit consumer handheld, this is a good option to have. For developers it also allows the use of bootloaders supporting PXE Boot, making firmware testing easier as ROM does not need to be reflashed each time. This can not only save time during development but also reduce wear on the internal flash, which has a finite lifespan.

Assuming such consumer devices succeed in the public education markets, we will naturally see an increase in low-level computing literacy in the general population. It will be important to set expectations for these devices: they are computers first and are not designed for standardized tests. Fortunately, plenty of low-cost scientific calculators are available that can meet every exam's compliance requirements and every exam-taker's mathematical needs.

The graphing calculators allowed on many tests are overkill, as the functions test-takers need are typically just as usable on lower cost devices. Students often choose graphing calculators instead of scientific calculators because they are curious about the computing aspects of the device. Because of the enforced limitations on graphing calculator hardware and dev ecosystems, this inevitably leads to disappointment and disillusionment with 8-bit computing in general.

A student who is happy with their 8-bit computer will likely not bother with a graphing calculator for exams, assuming graphing and mathematical software are available on the 8-bit computer. As long as the device can be used in classes, it can still become an important tool for students on-the-go.

It is important to note that such devices will face harsh competition from TI-Education in the U.S. and may have a stronger chance of success in Eastern and European markets. The excellent NumWorks calculator team is based in France, and I believe this gave them a competitive edge in gaining adoption in the global education market. TI-Education has a strong presence in France, but nothing like the near monopoly it holds in the U.S.

That being said, I believe that new 8-bit computing designs have the potential to empower the working class tremendously. Providing the tools to demystify low-level computing concepts during the course of K-12 public education would empower generations of working-class citizens to design their own technology solutions to suit their own needs, even in a high-scarcity environment. We'll discuss that more when we write about CollapseOS.

What else is in the future for 8-bit computing? A return of abandoned ISAs? It's worth noting that a genuine 6809 CPU is manufactured to this day, though the cost is high and supply much lower compared to the Z80, Z180, and 6502. It is also worth noting that the RCBus 80-pin standard can support many different ISAs, including 16-bit and possibly 32-bit CPUs.

Perhaps the community will develop its own open source, 8-bit ISA, in the spirit of RISC-V? Maybe RISC-V International will release a formal 8-bit specification based on existing compressed instructions? It would only be worth it if it saved dramatically on transistor count, but it would probably be the most viable option for an open source, 8-bit ISA.

These are all topics for future articles. Stay tuned...